Win Loss Analysis: Why You're Probably Doing It Wrong

We ran our first formal win loss analysis in 2022, at a company I worked for. We'd been in business for three years: three years of winning and losing deals without ever systematically asking why. When we finally looked at the data, we discovered something uncomfortable: the reason we thought we were losing deals was completely wrong.

We assumed we lost on price. Our reps said we lost on price. Our CRM's "loss reason" dropdown had "price" selected on about 60% of closed-lost deals. But when we actually talked to the buyers who chose competitors, only 15% mentioned price as the primary factor. The real reasons? Slow implementation timeline. Confusing onboarding. A missing integration that three competitors already had.

Our reps were wrong about why we lost because nobody asked the buyers. The reps guessed, and they guessed the least embarrassing answer. That's the core problem with most win loss analysis — it relies on internal assumptions instead of external data.

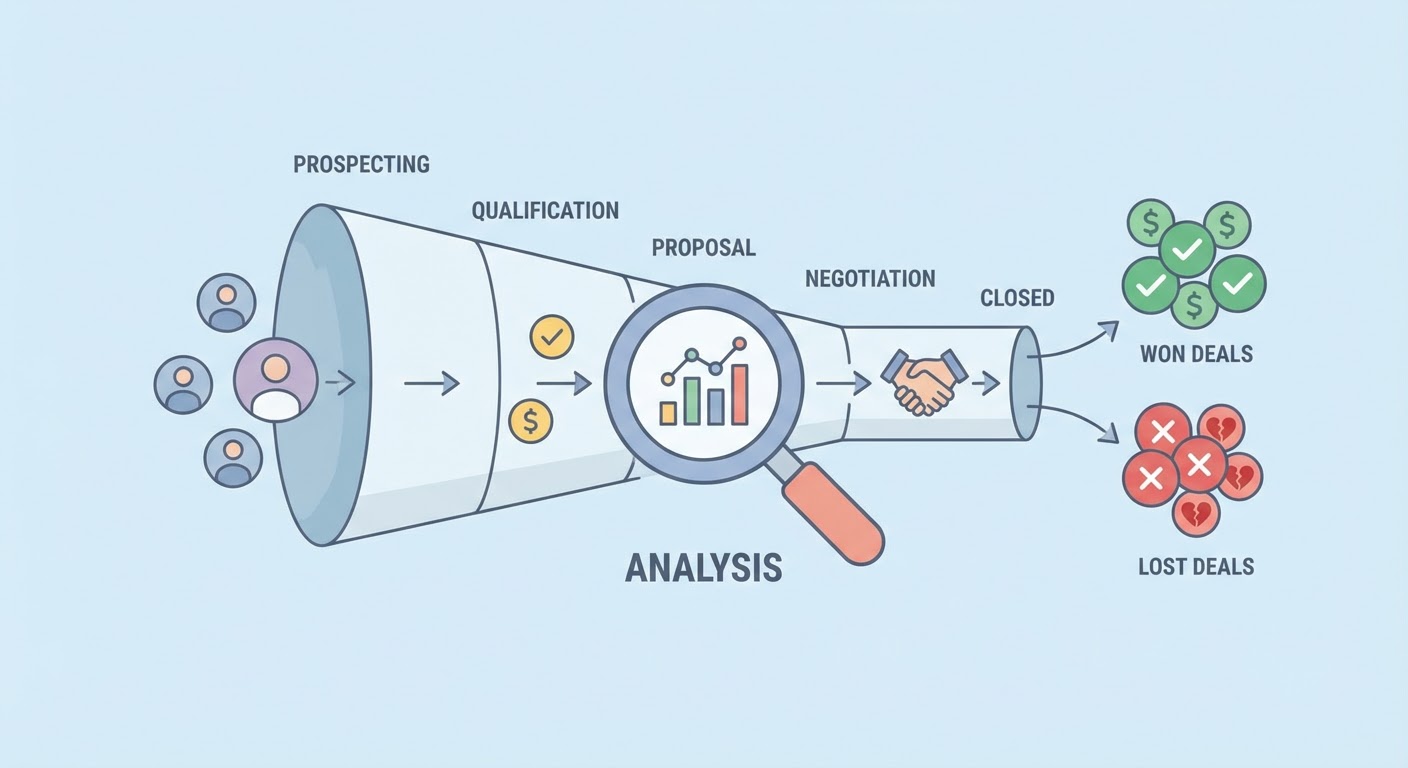

What Win Loss Analysis Actually Is

Win loss analysis is talking to buyers after a deal closes (won or lost) and asking them why they made the decision they made. Not why you think they decided. Not what your CRM says. What the buyer actually experienced during the evaluation.

There are two versions of this. The lightweight version uses internal data — CRM notes, rep debriefs, deal review conversations. It's better than nothing but biased toward what your team believes rather than what buyers experienced. The heavyweight version interviews the actual buyers. It's harder to do, awkward to request, and worth ten times more.

You need both, but if you only do one, do the buyer interviews. Five buyer interviews will teach you more about your competitive position than a year of internal deal reviews.
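If you do run both, the gap between them is a finding in its own right. The Python sketch below compares the rep's CRM loss reason with the buyer's stated primary reason for lost deals that have both. This is the kind of comparison that exposed the "price" assumption described earlier. The function name, the `(crm_reason, buyer_reason)` input shape, and the sample data are hypothetical, made up for illustration.

```python
from collections import Counter

def compare_reasons(pairs):
    """Compare rep-reported and buyer-reported loss reasons.

    `pairs` is a hypothetical list of (crm_reason, buyer_reason) tuples,
    one per lost deal where you have both a CRM entry and a buyer interview.
    Returns (crm_counts, buyer_counts, agreement_rate).
    """
    if not pairs:
        raise ValueError("need at least one deal with both reasons")
    crm_counts = Counter(crm for crm, _ in pairs)
    buyer_counts = Counter(buyer for _, buyer in pairs)
    # Share of deals where the rep's guess matches what the buyer said.
    agreement = sum(crm == buyer for crm, buyer in pairs) / len(pairs)
    return crm_counts, buyer_counts, agreement

# Illustrative data only: reps default to "price", buyers say otherwise.
pairs = [
    ("price", "price"),
    ("price", "implementation timeline"),
    ("price", "onboarding"),
    ("price", "missing integration"),
    ("product gap", "missing integration"),
]
crm, buyer, agreement = compare_reasons(pairs)
print(crm["price"], buyer["price"], agreement)  # 4 1 0.2
```

A low agreement rate tells you the CRM dropdown is a poor proxy for buyer reality, which is the argument for prioritizing interviews.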

How to Get Buyers to Talk to You

This is the part everyone overthinks. "Buyers won't take a post-deal call." "It'll be awkward asking someone who rejected us why they rejected us." "Legal won't let us."

I've run or commissioned maybe 200 win loss interviews across different companies. The response rate for lost deals hovers around 30-40% when you do it right. That's not great, but it's not zero. Here's what works.

Have someone other than the rep make the request. The buyer said no to your product. The rep is the face of that rejection. A different person — product marketing, customer research, even a third party — gets a higher response rate because the conversation feels less like a sales retry.

Make the ask within two weeks of the decision. Every day you wait, the buyer's memory fades and their willingness to help drops. Two weeks is the sweet spot. More than a month and your response rate falls off a cliff.
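The timing rule is easy to turn into a daily outreach queue. The Python sketch below assumes a hypothetical `Deal` record with a close date; the 14-day ideal window and 30-day cutoff come from the guidance above, while the field names and bucket logic are illustrative, not any CRM's API.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical deal record; field names are illustrative, not from a real CRM.
@dataclass
class Deal:
    deal_id: str
    outcome: str      # "won" or "lost"
    closed_on: date

IDEAL_WINDOW = timedelta(days=14)  # "within two weeks of the decision"
HARD_CUTOFF = timedelta(days=30)   # past a month, response rates fall off

def outreach_queue(deals, today):
    """Split closed deals into 'ask now' and 'late but worth trying' lists.

    Deals closed more than HARD_CUTOFF ago are dropped entirely.
    """
    ask_now, late = [], []
    for d in deals:
        age = today - d.closed_on
        if age < timedelta(0):
            continue  # close date in the future: bad data, skip it
        if age <= IDEAL_WINDOW:
            ask_now.append(d.deal_id)
        elif age <= HARD_CUTOFF:
            late.append(d.deal_id)
    return ask_now, late

deals = [
    Deal("A-101", "lost", date(2024, 5, 1)),   # 23 days old: late
    Deal("A-102", "won", date(2024, 5, 20)),   # 4 days old: ask now
    Deal("A-103", "lost", date(2024, 3, 1)),   # 84 days old: dropped
]
print(outreach_queue(deals, today=date(2024, 5, 24)))  # (['A-102'], ['A-101'])
```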

Frame it as learning, not selling. "We want to understand our market better" works. "Can you tell us how to improve?" works. "Let me address the concerns you had" doesn't work — that's just a thinly disguised sales call.

Keep it short. Fifteen minutes. Tell them fifteen minutes and mean it. Going over is the fastest way to destroy your reputation for future interviews.

The Questions That Actually Reveal Something

Most win loss interview guides have 15-20 questions. You don't need that many. You need five that go deep.

"Walk me through how you evaluated solutions." This reveals the buyer's process, which tells you more about their priorities than any direct question. Listen for which criteria they mention first, which vendors they evaluated, and what their internal decision process looked like.

"What were the top two or three factors in your final decision?" Not the top five. Two or three. Force them to prioritize. Whatever doesn't make the top three probably didn't drive the decision, even if it came up during the sales process.

"Where did we fall short compared to the option you chose?" This is the uncomfortable one. Let them answer without defending yourself. Take notes. Resist the urge to explain. The moment you start justifying, the interview becomes a debate and you stop learning.

"Was there anything we could have done differently during the process that would have changed the outcome?" This question separates product issues from sales process issues. Sometimes you lose because the product isn't right. Sometimes you lose because the demo was bad or the rep didn't follow up or your pricing was confusing. Those are fixable without changing the product.

"What's one thing you wish [competitor they chose] did better?" This is a gift. It tells you where the company that beat you is weak. Use it for competitive positioning.

Finding Patterns, Not Anecdotes

One interview is a story. Ten interviews reveal a pattern. The value of win loss analysis comes from aggregation, not from any individual conversation.

After every interview, tag it with categories: loss reason (product gap, pricing, sales process, timing, incumbent advantage), competitor mentioned, deal size, buyer persona. After you have 15-20 interviews, pull up the tags and look for clusters.
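Tagging only works if everyone uses the same vocabulary. A minimal Python sketch of a tag record is below. The loss-reason categories mirror the list above, but the exact label strings, the `InterviewTag` name, and the dollar unit for deal size are assumptions. Validation rejects misspelled reasons so they don't split one cluster into two.

```python
from dataclasses import dataclass
from typing import Optional

# Categories from the article; the exact label strings are an assumption.
LOSS_REASONS = {"product_gap", "pricing", "sales_process", "timing", "incumbent_advantage"}

@dataclass(frozen=True)
class InterviewTag:
    interview_id: str
    outcome: str                 # "won" or "lost"
    loss_reason: Optional[str]   # one of LOSS_REASONS for losses, None for wins
    competitor: Optional[str]    # competitor the buyer mentioned, if any
    deal_size: int               # assumed: annual contract value in dollars
    persona: str                 # buyer persona, e.g. "VP Engineering"

    def __post_init__(self):
        # Reject typos like "price" vs "pricing" that would fragment the tallies.
        if self.outcome not in {"won", "lost"}:
            raise ValueError(f"unknown outcome: {self.outcome}")
        if self.outcome == "lost" and self.loss_reason not in LOSS_REASONS:
            raise ValueError(f"unknown loss reason: {self.loss_reason}")

tag = InterviewTag("iv-1", "lost", "pricing", "Competitor B", 40000, "VP Sales")
win = InterviewTag("iv-2", "won", None, "Competitor B", 25000, "CTO")
```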

If 60% of your losses against Competitor B mention "faster implementation," that's a pattern. If you're losing enterprise deals for different reasons than SMB deals, that's a pattern. If your win rate against a specific competitor changed over the last two quarters, that's a pattern worth investigating.
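The first pattern above ("60% of losses against Competitor B mention faster implementation") is a simple grouped share. The Python sketch below computes it per competitor and skips competitors with too few losses to count as more than an anecdote. The dict keys, the `min_n=5` threshold, and the sample data are hypothetical.

```python
from collections import defaultdict

def theme_share_by_competitor(interviews, theme, min_n=5):
    """Share of lost-deal interviews per competitor that mention `theme`.

    `interviews` is a list of dicts with hypothetical keys:
    "outcome" ("won"/"lost"), "competitor" (str), "themes" (set of str).
    Competitors with fewer than `min_n` losses are left out.
    """
    losses = defaultdict(int)
    mentions = defaultdict(int)
    for iv in interviews:
        if iv["outcome"] != "lost" or not iv.get("competitor"):
            continue
        losses[iv["competitor"]] += 1
        if theme in iv["themes"]:
            mentions[iv["competitor"]] += 1
    return {comp: mentions[comp] / n for comp, n in losses.items() if n >= min_n}

# Illustrative data: 5 losses to B (3 cite implementation), 2 losses to A.
sample = (
    [{"outcome": "lost", "competitor": "Competitor B", "themes": {"faster implementation"}}] * 3
    + [{"outcome": "lost", "competitor": "Competitor B", "themes": {"pricing"}}] * 2
    + [{"outcome": "lost", "competitor": "Competitor A", "themes": {"faster implementation"}}] * 2
    + [{"outcome": "won", "competitor": "Competitor B", "themes": set()}]
)
print(theme_share_by_competitor(sample, "faster implementation"))  # {'Competitor B': 0.6}
```

Competitor A drops out because two losses are a story, not a pattern.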

A G2 review win loss analyzer can accelerate the pattern-finding. It pulls structured data from G2 reviews where buyers explicitly compare your product to alternatives. It's not a replacement for direct interviews, but it adds volume to your dataset and catches themes you might miss in a small interview sample.

Turning Analysis Into Action

The output of win loss analysis should be a list of changes, not a report nobody reads. Every pattern you identify should connect to an action someone owns.

Product gap showing up in 40% of losses? File it with product and attach the buyer quotes. Sales process issue? Change the playbook and train the team. Positioning problem? Rewrite the competitive battlecard. Pricing confusion? Simplify the pricing page.

Track the changes and measure whether they affect win rates in subsequent quarters. If you fixed the implementation speed concern and your win rate against Competitor B improves, the analysis paid for itself. If it doesn't improve, the diagnosis was probably wrong and you need to dig deeper.
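Measuring the effect of a fix comes down to comparing win rates against one competitor across two quarters. A minimal Python sketch follows; the `(quarter, competitor, won)` tuple shape is a hypothetical simplification of a CRM export, and the numbers are made up.

```python
def win_rate(deals, competitor, quarter):
    """Win rate against `competitor` for deals closed in `quarter`.

    `deals` is a list of (quarter, competitor, won) tuples (hypothetical shape).
    Returns None when there were no competitive deals in that quarter.
    """
    outcomes = [won for q, comp, won in deals if q == quarter and comp == competitor]
    if not outcomes:
        return None
    return sum(outcomes) / len(outcomes)

def win_rate_change(deals, competitor, before, after):
    """Difference in win rate between two quarters, or None if either is empty."""
    b = win_rate(deals, competitor, before)
    a = win_rate(deals, competitor, after)
    if b is None or a is None:
        return None
    return a - b

# Illustrative: 2 of 8 won before the fix, 4 of 8 after.
deals = (
    [("2024Q1", "Competitor B", True)] * 2 + [("2024Q1", "Competitor B", False)] * 6
    + [("2024Q2", "Competitor B", True)] * 4 + [("2024Q2", "Competitor B", False)] * 4
)
print(win_rate_change(deals, "Competitor B", "2024Q1", "2024Q2"))  # 0.25
```

With only eight deals per quarter, a jump like this can still be noise, so treat it as a reason to keep watching rather than proof the fix worked.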

The companies that get the most value from win loss analysis do it continuously, not as a one-time project. Five interviews per month, tagged and categorized, with a quarterly review of patterns. That rhythm catches shifts in competitive dynamics within a quarter instead of discovering them after a year of declining win rates.
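Hitting five interviews a month means sending more than five requests. The Python sketch below works out the outreach volume from a response rate, assuming every request goes to a lost deal at the 30 to 40% rate quoted earlier. The function name is hypothetical, and the result is an expected value, so some months will still fall short.

```python
def requests_needed(target_interviews: int, response_rate_pct: int) -> int:
    """Outreach requests needed to expect `target_interviews` completed calls.

    Uses integer percentages and ceiling division, since you can't send
    a fraction of a request and float rounding could under-count.
    """
    if not 0 < response_rate_pct <= 100:
        raise ValueError("response rate must be in (0, 100]")
    return (target_interviews * 100 + response_rate_pct - 1) // response_rate_pct

for rate in (30, 40):
    print(rate, requests_needed(5, rate))
# 30 17
# 40 13
```

At those rates, a steady five interviews a month takes roughly 13 to 17 requests.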

Why Use an Agent for This

The G2 review win loss analyzer mines review data for win/loss signals at a scale you can't match manually: hundreds of reviews tagged with competitor comparisons, strengths, weaknesses, and switching reasons. It gives you the quantitative layer that interview data alone can't provide.

The Gong competitive intel tracker pulls competitive mentions from recorded calls. Instead of asking reps to remember what competitors came up, it scans actual conversations and extracts what was said. The difference between what reps report and what actually happened in calls is often surprising.

The call insights analyzer goes deeper on individual conversations, identifying patterns in how deals progress differently when specific competitors are mentioned. If deals that mention Competitor C tend to stall at the technical evaluation stage, that's intelligence your reps can use immediately.
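To make the "stall at technical evaluation" idea concrete, here is a hypothetical Python sketch of that kind of grouping. It is not how the Call Insights Analyzer works internally. It assumes you already have, per open deal, the competitors mentioned on calls, the current stage, and the date the deal entered that stage; the 30-day stall threshold is also an assumption.

```python
from collections import Counter, defaultdict
from datetime import date

def stall_stages_by_competitor(deals, today, stall_days=30):
    """Count where open deals are stuck, grouped by competitor mentioned.

    Each deal is a dict with hypothetical keys: "competitors" (set of names
    mentioned on calls), "stage" (current stage), and "stage_entered" (date).
    A deal counts as stalled after `stall_days` in its current stage.
    """
    result = defaultdict(Counter)
    for d in deals:
        if (today - d["stage_entered"]).days < stall_days:
            continue
        for comp in d["competitors"]:
            result[comp][d["stage"]] += 1
    return result

deals = [
    {"competitors": {"Competitor C"}, "stage": "technical evaluation", "stage_entered": date(2024, 3, 1)},
    {"competitors": {"Competitor C", "Competitor B"}, "stage": "technical evaluation", "stage_entered": date(2024, 3, 20)},
    {"competitors": {"Competitor B"}, "stage": "procurement", "stage_entered": date(2024, 4, 25)},
]
stalled = stall_stages_by_competitor(deals, today=date(2024, 5, 1))
print(stalled["Competitor C"].most_common(1))  # [('technical evaluation', 2)]
```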

Stop guessing why you lose deals. Ask the people who decided.

Try These Agents

- G2 Review Win Loss Analyzer — Review-based competitive win/loss intelligence

- Gong Competitive Intel Tracker — Competitive mentions extracted from sales calls

- Call Insights Analyzer — Pattern analysis across deal conversations

- Competitor Review Analysis — Deep dive into competitor review sentiment